Chao Ma (Stanford) – Implicit Bias of Optimization Algorithms and Generalization of Over-Parameterized Neural Networks

Modern neural networks are usually over-parameterized: the number of parameters exceeds the number of training examples. In this case the loss function tends to have many (or even infinitely many) global minima, so optimization algorithms face the challenge of minima selection in addition to convergence. Specifically, when training a neural network, the algorithm not only has to find a global minimum, but also needs to select one that generalizes well from among many others. We study the mechanisms that drive global minima selection by optimization algorithms, as well as their connection with good generalization performance. First, with a linear stability theory, we show that stochastic gradient descent (SGD) favors global minima with flat and uniform landscapes. Then, we build a theoretical connection between flatness and generalization performance based on a special multiplicative structure of neural networks. Connecting the two results, we develop generalization bounds for neural networks trained by SGD. We also study the behavior of optimization algorithms around the manifold of minima and characterize their exploration from one minimum to another.
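As a rough illustration of the linear stability idea mentioned in the abstract (a toy sketch, not the speaker's analysis), consider plain gradient descent without noise on the one-dimensional quadratic loss f(x) = 0.5 * a * x^2. Each step multiplies x by (1 - lr * a), so the minimum at x = 0 is stable only when the curvature a is at most 2 / lr. The function name `gd_distance` and all numeric values below are assumptions chosen for illustration.

```python
def gd_distance(curvature, lr, steps=50, x0=1e-3):
    """Run gradient descent on f(x) = 0.5 * curvature * x**2.

    Returns the distance |x| from the minimum at x = 0 after `steps` updates.
    """
    x = x0
    for _ in range(steps):
        x -= lr * curvature * x  # the gradient of f at x is curvature * x
    return abs(x)


lr = 0.1  # with this learning rate the stability threshold is 2 / lr = 20

# Flat minimum (curvature 5 < 20): each step shrinks the distance to the minimum.
flat = gd_distance(curvature=5.0, lr=lr)

# Sharp minimum (curvature 25 > 20): each step grows the distance, so the
# iterates escape even though they started very close to the minimum.
sharp = gd_distance(curvature=25.0, lr=lr)

print(f"flat minimum:  final distance {flat:.3e}")
print(f"sharp minimum: final distance {sharp:.3e}")
```

In this toy setting, a fixed learning rate can only settle at minima whose curvature is below the threshold, which is one simple sense in which the optimizer prefers flatter minima.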

Bio: I am currently a Szegő Assistant Professor in the Department of Mathematics at Stanford University. My research interests lie in the theory and application of machine learning. I am especially interested in a theoretical understanding of the optimization behavior of deep neural networks, e.g., the implicit bias of optimization algorithms and its connection with generalization. My mentor at Stanford is Professor Lexing Ying.

Before joining Stanford, I obtained my PhD from the Program in Applied and Computational Mathematics at Princeton University, under the supervision of Professor Weinan E. I received my bachelor's degree from the School of Mathematical Sciences at Peking University.

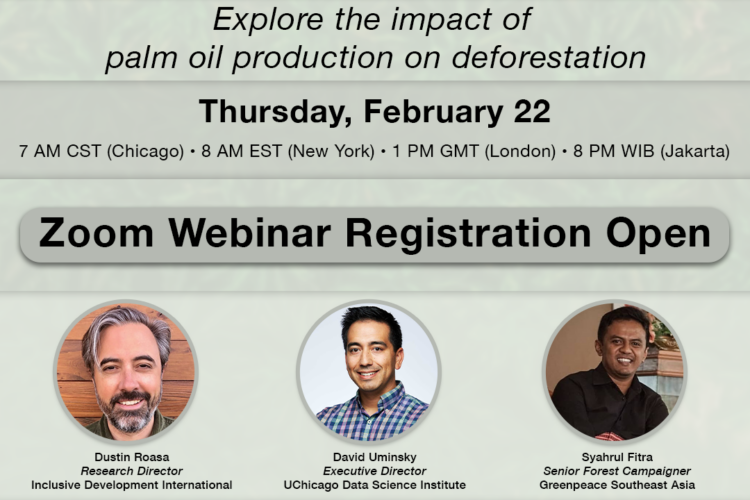

Navigating the Data Science Job Market: Insights and Opportunities

Inderjit S. Dhillon (The University of Texas at Austin) – MatFormer: Nested Transformer for Elastic Inference

Brandon Stewart (Princeton University) – Getting Inference Right with LLM Annotations in the Social Sciences