Past Event: Feb 27, 2023

Frederic Koehler (Stanford)

February 27, 2023 4:30 PM – 5:30 PM

Jones 303

Talk Details TBD

Bio: I am currently at Stanford University as a Motwani Postdoctoral Fellow. Immediately before that, I was a research fellow at UC Berkeley’s Simons Institute in the Program on Computational Complexity of Statistical Inference. I received my PhD in Mathematics and Statistics from MIT, where I was co-advised by Ankur Moitra and Elchanan Mossel, and earlier I received my undergraduate degree in Mathematics from Princeton University. My current research interests include computational learning theory and related topics: probability theory, high-dimensional statistics, optimization, and related aspects of statistical physics. In particular, I am very interested in learning and inference in graphical models.

Past Event: Apr 08, 2024

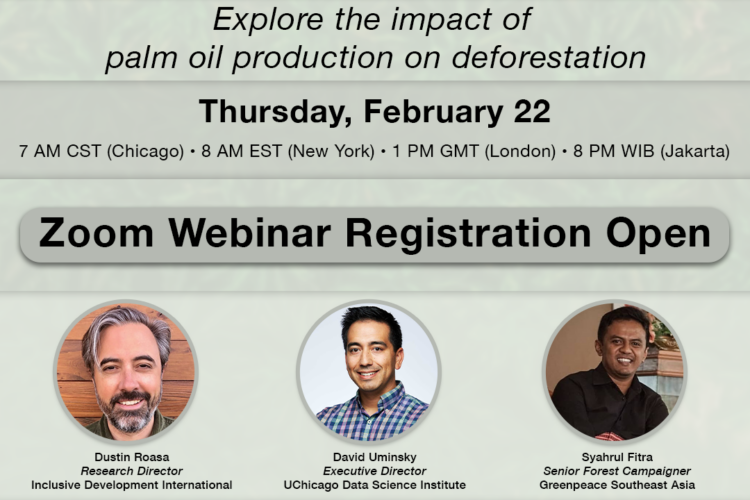

Navigating the Data Science Job Market: Insights and Opportunities

Upcoming Event: Apr 05, 2024

Inderjit S. Dhillon (The University of Texas at Austin) – MatFormer: Nested Transformer for Elastic Inference

Upcoming Event: May 02, 2024

Brandon Stewart (Princeton University) – Getting Inference Right with LLM Annotations in the Social Sciences

Upcoming Event: Feb 22, 2024