Past Event: Oct 28, 2022

Human + AI Conference

October 28, 2022 9:00 AM – 5:30 PM

John Crerar Library, Room 390

AI has achieved impressive success in a wide variety of domains, ranging from medical diagnosis to creative image generation. This success provides rich opportunities for AI to address important societal challenges, but there are also growing concerns about the bias and harm that AI systems may cause. This conference brings together diverse perspectives to think about the best way for AI to fit into society and how to develop the best AI for humans.

View agenda and speaker information below.

The organizing committee for the Human + AI Conference is Chenhao Tan, Sendhil Mullainathan, and James Evans. This event is made possible by the generous support of the Stevanovich Center for Financial Mathematics.

Agenda

Friday, October 28, 2022

9:00 am–9:05 am

Welcome

9:05 am–9:50 am

Some Very Human Challenges in Responsible AI (Or Why My Research Trajectory Took a Surprising Turn)

Jenn Wortman Vaughan, Senior Principal Researcher, Microsoft Research, New York City

9:50 am–10:35 am

Aligning Algorithms with Consumers’ Prediction Preferences

Berkeley J. Dietvorst, Associate Professor of Marketing, UChicago Booth School of Business

10:35 am–11:20 am

TBD

Krzysztof Gajos, Gordon McKay Professor of Computer Science, Harvard University

11:20 am–11:40 am

Break

11:40 am–12:30 pm

What do you wish to see in Human+AI (in five years)?

12:30 pm–1:30 pm

Lunch

1:30 pm–2:15 pm

Decision Science in the Age of Augmented Cognition

Daniel Oppenheimer, Professor, Dietrich College of Humanities and Social Sciences, Carnegie Mellon University

2:15 pm–3:00 pm

Toward a Unifying Framework for Combining Complementary Strengths of Humans and ML toward Better Predictive Decision-Making

Hoda Heidari, Assistant Professor, Carnegie Mellon University

3:00 pm–3:15 pm

Break

3:15 pm–4:00 pm

Using Theory, Sensors, and Machines to Quantify the Nature of Social Interactions

Marc Berman, Associate Professor of Psychology, University of Chicago

4:00 pm–4:45 pm

Human + AI interaction in the wild: A case study of a child welfare risk assessment tool

Alexandra Chouldechova, Principal Researcher in the Fairness, Accountability, Transparency and Ethics (FATE) group at Microsoft Research NYC, and the Estella Loomis McCandless Associate Professor of Statistics and Public Policy at Carnegie Mellon University's Heinz College of Information Systems and Public Policy

4:45 pm–5:30 pm

What are the next steps to realize the wishes?


May

7

Upcoming EventMay 07, 2024

PalmWatch How-To: Learn how you can use PalmWatch in your research or reporting

Apr

28

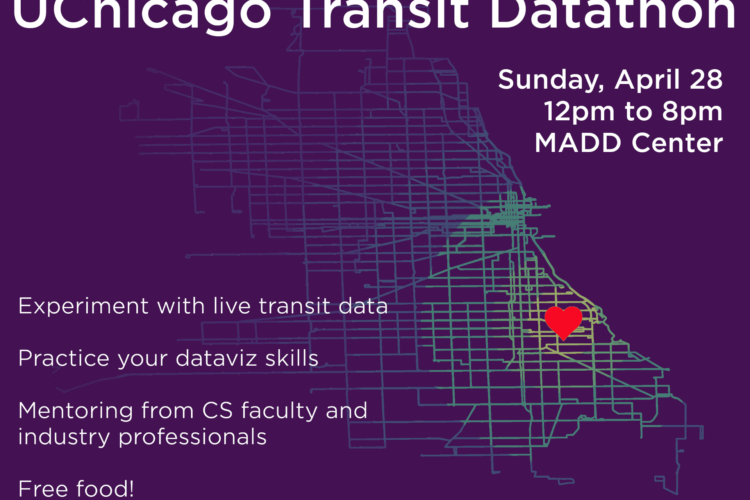

Upcoming EventApr 28, 2024

First Annual UChicago Transit Datathon

Apr

8

Past EventApr 08, 2024

Navigating the Data Science Job Market: Insights and Opportunities

May

6

Upcoming EventMay 06, 2024