Suvrit Sra (MIT) – Geometric Optimization: Old and New

Part of the Autumn 2022 Distinguished Speaker Series. Lunch will be served at noon and the talk will begin at 12:30. To attend via Zoom, please register to receive login instructions.

This talk is about non-Euclidean optimization, primarily (but not only) about problems whose parameters lie on a Riemannian manifold. I will lay particular emphasis on geodesically convex (g-convex) optimization, a vast class of non-convex optimization problems that remains tractable. I will recall some motivating background and present canonical examples. Since our 2016 paper on g-convex optimization, the iteration complexity theory for g-convex optimization has grown substantially. I will summarize some of the recent progress, including Riemannian acceleration, saddle-point problems, and Langevin MCMC, while noting some key open problems.

Bio: Suvrit Sra is an Associate Professor of EECS at MIT, and a core member of the Laboratory for Information and Decision Systems (LIDS) and the Institute for Data, Systems, and Society (IDSS), as well as a member of the MIT-ML and Statistics groups. He obtained his PhD in Computer Science from the University of Texas at Austin. Before moving to MIT, he was a Senior Research Scientist at the Max Planck Institute for Intelligent Systems, Tübingen, Germany. His research bridges mathematical areas such as differential geometry, matrix analysis, convex analysis, probability theory, and optimization with machine learning. He founded the OPT (Optimization for Machine Learning) series of workshops, held from 2008 to 2017 at the NeurIPS (erstwhile NIPS) conference. He co-edited a book of the same name (MIT Press, 2011). He is also a co-founder and chief scientist of macro-eyes, an OPT+ML driven startup with a focus on intelligent supply chains.

PalmWatch How-To: Learn how you can use PalmWatch in your research or reporting

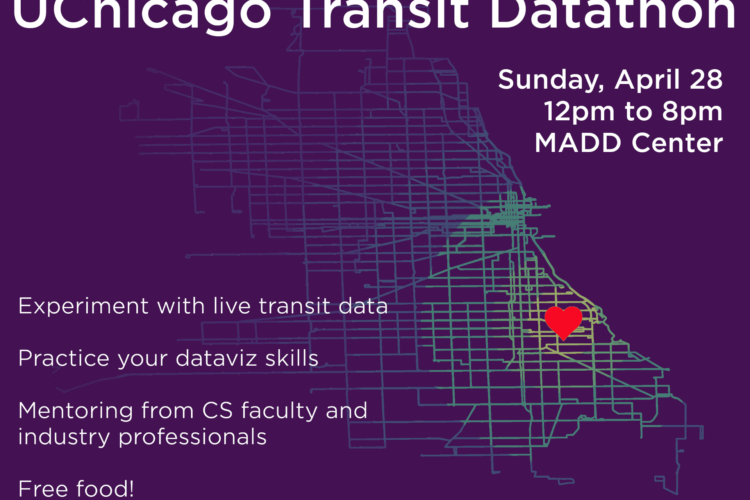

First Annual UChicago Transit Datathon

Navigating the Data Science Job Market: Insights and Opportunities